Laravel: Pub-Sub Messaging with Apache Kafka

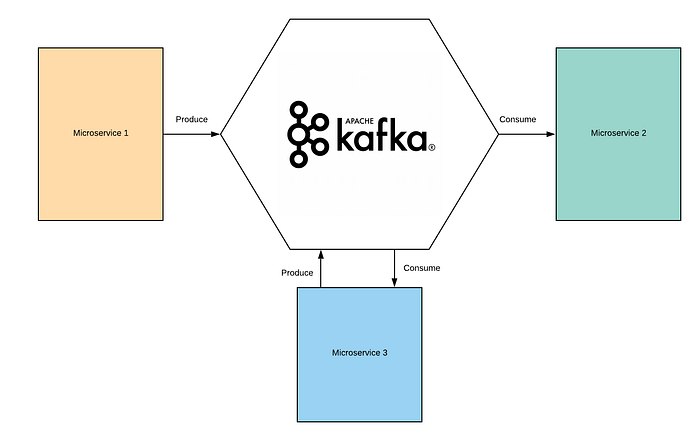

Microservices usually communicate in one of two ways: synchronous HTTP request/response against resource APIs (e.g. REST), which covers most cases, or lightweight asynchronous messaging (e.g. the Pub/Sub pattern) when propagating updates between several microservices.

Pub/Sub is a messaging pattern where the senders (Publishers) publish messages to topics, which are managed by a broker, without knowing whether any receivers (Subscribers) exist. The receivers subscribe to the topics they are interested in. When the broker receives a new message on a topic, it makes that message available to every subscriber of that topic.
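To make the decoupling concrete, here is a minimal sketch of the pattern in plain PHP. It is a toy in-memory broker written for illustration only, not how Kafka works internally; the class name `InMemoryBroker` and the topic names are assumptions.

```php
<?php

declare(strict_types=1);

// Toy in-memory broker: illustrates the Pub/Sub pattern only, not Kafka itself.
final class InMemoryBroker
{
    /** @var array<string, array<int, callable>> subscriber callbacks keyed by topic */
    private array $subscribers = [];

    // Register a callback that receives every message published to $topic.
    public function subscribe(string $topic, callable $handler): void
    {
        $this->subscribers[$topic][] = $handler;
    }

    // Deliver $message to all current subscribers of $topic and
    // return how many subscribers received it (possibly zero).
    public function publish(string $topic, string $message): int
    {
        $handlers = $this->subscribers[$topic] ?? [];
        foreach ($handlers as $handler) {
            $handler($message);
        }

        return count($handlers);
    }
}

$broker = new InMemoryBroker();
$received = ['reporting' => [], 'audit' => []];

// Two independent subscribers on the same (hypothetical) topic.
$broker->subscribe('inventories', function (string $m) use (&$received): void {
    $received['reporting'][] = $m;
});
$broker->subscribe('inventories', function (string $m) use (&$received): void {
    $received['audit'][] = $m;
});

// The publisher only knows the topic name, not who is listening.
$delivered = $broker->publish('inventories', '{"make":"Toyota","model":"Rav4"}');

// Publishing to a topic nobody subscribed to is not an error.
$ignored = $broker->publish('orders', '{"id":1}');
```

The publisher never references a subscriber directly, so new consumers can be added without touching the publishing code.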

The Pub/Sub pattern can be implemented with various tools. Selecting the right one depends on the project size and requirements. Here are some tools you can use to implement Pub/Sub:

- Apache Kafka

- RabbitMQ

- Redis

In this blog post, I am going to show you how to integrate Apache Kafka with Laravel in a microservice architecture.

Kafka terminologies:

Topic: a named category (or feed) that groups related messages together.

Producer: processes that push messages to Kafka topics.

Consumer: processes that consume messages from Kafka topics.

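Partition: an ordered, immutable sequence of a topic's messages that is continually appended to, forming a structured commit log. A topic is split into one or more partitions.

Kafka broker: a server that stores and serves messages. One or more brokers form a Kafka cluster.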
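The relationship between topics, partitions and offsets can be sketched with a toy model in plain PHP. This is only an illustration: the `ToyTopic` class is hypothetical, and choosing the partition as a CRC32 hash of the key modulo the partition count is a simplifying assumption (real Kafka clients ship their own partitioners).

```php
<?php

declare(strict_types=1);

// Toy model of a topic: a fixed number of append-only partitions.
final class ToyTopic
{
    /** @var array<int, array<int, string>> one append-only log per partition */
    private array $partitions;

    public function __construct(int $partitionCount)
    {
        $this->partitions = array_fill(0, $partitionCount, []);
    }

    // Producer side: pick a partition from the key, append the message,
    // and return [partition, offset] of the newly written message.
    public function append(string $key, string $message): array
    {
        // Illustrative assumption: hash of the key modulo the partition count.
        $partition = crc32($key) % count($this->partitions);
        $this->partitions[$partition][] = $message;

        return [$partition, count($this->partitions[$partition]) - 1];
    }

    // Consumer side: read every message in a partition from $offset onward.
    public function read(int $partition, int $offset): array
    {
        return array_slice($this->partitions[$partition], $offset);
    }
}

$topic = new ToyTopic(3);

// Messages with the same key land in the same partition, in order.
[$p1, $o1] = $topic->append('Toyota', '{"make":"Toyota","model":"Rav4"}');
[$p2, $o2] = $topic->append('Toyota', '{"make":"Toyota","model":"Rav4"}');

// A consumer that already processed offset 0 resumes from offset 1.
$remaining = $topic->read($p1, 1);
```

Existing entries are never modified, only appended to, which is why a consumer can track its progress with a single offset per partition.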

Setup:

Two microservices will be used to demonstrate the Pub/Sub mechanism. Microservice 1 (Pub) ingests inventories and pushes the data to a Kafka topic. Microservice 2 (Sub) handles metrics and reporting, and consumes the inventory data from the Kafka topic.

Prerequisites:

- Docker

- Docker-compose

- librdkafka (Already provided with the docker image)

- PHP-rdkafka (Already provided with the docker image)

Let's set up Microservice 1 (Pub):

1. Install Docker and Docker-compose on your machine.

2. Create a custom Docker network (pub_sub_network) for this tutorial. This will enable communication between the two microservices.

docker network create pub_sub_network

3. Clone the repo from https://github.com/anam-hossain/laravel-kafka-pub-example

git clone https://github.com/anam-hossain/laravel-kafka-pub-example.git

4. Copy .env.local to .env

5. Ensure that KAFKA_BROKERS=kafka:9092 is present in the .env file.

6. Run docker-compose up -d inside the repo directory.

7. Log in to the microservice 1 container

docker-compose exec kafka_producer_php sh

8. Install composer packages

composer install

9. Run the database migrations

php artisan migrate

10. Browse http://localhost:8787 to verify that microservice 1 is up and running.

Microservice 2 (Sub):

1. Ensure that microservice 1 is up and running, as Apache Kafka is installed in microservice 1.

2. Open another tab/window in your terminal. Do not close the microservice 1 terminal.

3. Clone the repo from https://github.com/anam-hossain/laravel-kafka-sub-example

git clone https://github.com/anam-hossain/laravel-kafka-sub-example.git

4. Copy .env.local to .env

5. Ensure that KAFKA_BROKERS=kafka:9092 is present in the .env file.

6. Run docker-compose up -d from the repo directory.

7. Log in to the microservice 2 container

docker-compose exec kafka_consumer_php sh

8. Install composer packages

composer install

9. Run the database migrations

php artisan migrate

10. Browse http://localhost:8788 to check that microservice 2 is up and running.

11. Once confirmed that the service is up and running, go back to the terminal and run the following command to start the Kafka consumer:

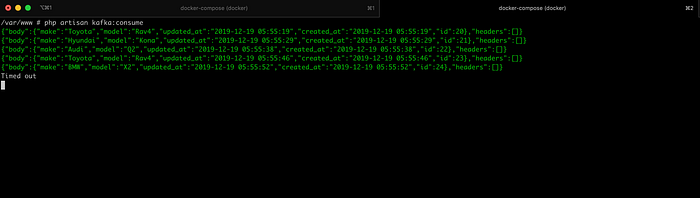

php artisan kafka:consume

kafka:consume is a long-lived process that constantly polls Kafka for new data. Continue to the demo section below and do not kill the kafka:consume command.
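For readers curious what such a long-lived consumer might look like, here is a rough sketch using the php-rdkafka extension's high-level consumer. It is not the code from the example repository; the function names, the group id and the 120-second poll timeout are assumptions, and the loop needs the rdkafka extension and a reachable broker to actually run.

```php
<?php

declare(strict_types=1);

// Pure helper: decode one message payload; returns null if it is not a JSON object/array.
function decodeInventoryPayload(string $payload): ?array
{
    $data = json_decode($payload, true);

    return is_array($data) ? $data : null;
}

// Long-lived loop: keeps polling Kafka and hands each decoded message to $handler.
function consumeForever(string $brokers, string $topic, string $groupId, callable $handler): void
{
    $conf = new RdKafka\Conf();
    $conf->set('metadata.broker.list', $brokers);
    $conf->set('group.id', $groupId);
    // Start from the oldest message when the group has no committed offset yet.
    $conf->set('auto.offset.reset', 'earliest');

    $consumer = new RdKafka\KafkaConsumer($conf);
    $consumer->subscribe([$topic]);

    while (true) {
        // Wait up to 120 seconds for the next message.
        $message = $consumer->consume(120 * 1000);

        switch ($message->err) {
            case RD_KAFKA_RESP_ERR_NO_ERROR:
                $data = decodeInventoryPayload($message->payload);
                if ($data !== null) {
                    $handler($data);
                }
                break;
            case RD_KAFKA_RESP_ERR__PARTITION_EOF:
            case RD_KAFKA_RESP_ERR__TIMED_OUT:
                // Nothing new yet; keep polling.
                break;
            default:
                throw new RuntimeException($message->errstr(), $message->err);
        }
    }
}

// Hypothetical usage (topic and group id are placeholders):
// consumeForever('kafka:9092', 'inventories', 'reporting-service', function (array $data): void {
//     echo json_encode($data), PHP_EOL;
// });
```

Because the loop never returns, the command keeps the terminal busy, which is why the consumer should stay running in its own tab during the demo.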

Demo:

Let’s ingest some inventories via microservice 1. This will trigger Laravel Eloquent model events, which push messages to Kafka. During ingestion, keep an eye on the microservice 2 terminal. You will see the messages consumed by the Kafka consumer almost immediately.
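The producing side can be sketched roughly as follows with php-rdkafka. This is an assumption-laden illustration rather than the repository's actual code: the function names, the message shape (only `make` and `model`) and the 10-second flush timeout are all hypothetical.

```php
<?php

declare(strict_types=1);

// Pure helper: turn model attributes into the JSON message body.
function buildInventoryMessage(array $attributes): string
{
    return json_encode([
        'make' => $attributes['make'] ?? null,
        'model' => $attributes['model'] ?? null,
    ], JSON_THROW_ON_ERROR);
}

// Publish one message (needs the rdkafka extension and a reachable broker).
function publishToKafka(string $brokers, string $topic, string $payload): void
{
    $conf = new RdKafka\Conf();
    $conf->set('metadata.broker.list', $brokers);

    $producer = new RdKafka\Producer($conf);
    $kafkaTopic = $producer->newTopic($topic);

    // RD_KAFKA_PARTITION_UA lets the client library choose the partition.
    $kafkaTopic->produce(RD_KAFKA_PARTITION_UA, 0, $payload);
    $producer->poll(0);

    // Wait up to 10 seconds for delivery so the message is not lost when PHP exits.
    if ($producer->flush(10000) !== RD_KAFKA_RESP_ERR_NO_ERROR) {
        throw new RuntimeException('Unable to flush message to Kafka');
    }
}

$message = buildInventoryMessage(['id' => 7, 'make' => 'Toyota', 'model' => 'Rav4']);

// Hypothetical call site, e.g. inside an Eloquent "created" event handler:
// publishToKafka('kafka:9092', 'inventories', $message);
```

In a Laravel application, a call like this would typically sit in a model event hook or an observer, so that every newly saved inventory is published without the controller knowing about Kafka.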

Data ingestion via Microservice 1:

Payload 1:

http://localhost:8787/inventories?make=Toyota&model=Rav4

Payload 2:

http://localhost:8787/inventories?make=Hyundai&model=Kona

Payload 3:

http://localhost:8787/inventories?make=Audi&model=Q2

Payload 4:

http://localhost:8787/inventories?make=Toyota&model=Rav4

Payload 5:

http://localhost:8787/inventories?make=BMW&model=X2
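If you prefer not to paste each URL into the browser, the five requests can be generated from a short script. This is a convenience sketch only; the helper name `inventoryUrl` is an assumption, and the actual HTTP calls are left commented out so the script has no side effects until you enable them.

```php
<?php

declare(strict_types=1);

// Build the ingestion URL for one make/model pair.
function inventoryUrl(string $baseUrl, string $make, string $model): string
{
    return rtrim($baseUrl, '/') . '/inventories?' . http_build_query([
        'make' => $make,
        'model' => $model,
    ]);
}

// The five payloads from the list above, in the same order.
$payloads = [
    ['Toyota', 'Rav4'],
    ['Hyundai', 'Kona'],
    ['Audi', 'Q2'],
    ['Toyota', 'Rav4'],
    ['BMW', 'X2'],
];

$urls = array_map(
    fn (array $p): string => inventoryUrl('http://localhost:8787', $p[0], $p[1]),
    $payloads
);

// Uncomment to actually send the requests to microservice 1:
// foreach ($urls as $url) {
//     file_get_contents($url);
// }
```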

Now switch to the microservice 2 terminal. You will see that the payloads have already been consumed by the Kafka consumer.

Finally, browse http://localhost:8788/stats to see the stats that microservice 2 has built from the consumed messages.

Congratulations, we have just built an application using Apache Kafka with Laravel. Let me know what you guys think.